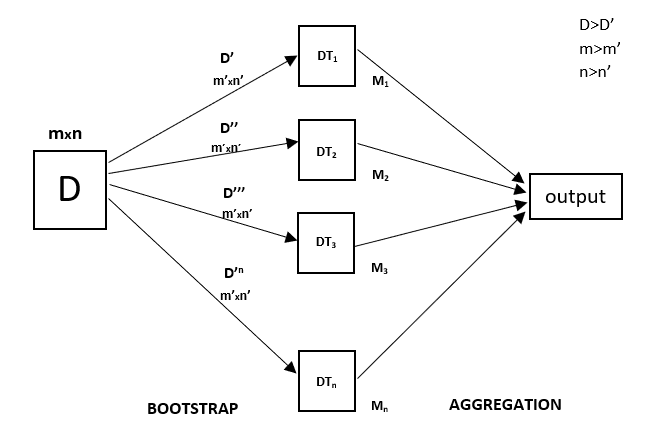

Welcome to this article on Random Forest Regression. Let me quickly walk you through the meaning of regression first.

Regression is a machine learning technique used to predict continuous values across a certain range. Let us understand this concept with an example. Consider the salaries of employees and their experience in years. A regression model trained on this data can predict the salary of an employee even for a number of years that has no corresponding salary in the dataset.

Random forest regression is an ensemble learning technique. In ensemble learning, you take multiple algorithms, or the same algorithm multiple times, and combine them into a model that is more powerful than any single one. A forest's prediction tends to be more accurate than a single tree's because it combines many predictions, and averaging them smooths out the errors of individual trees. The forest is also more stable: a change in the dataset can substantially alter one tree, but it has much less effect on the average over the whole forest.

Steps to perform the random forest regression

This is a four-step process:

1. Pick K random data points from the training set.
2. Build the decision tree associated with these K data points.
3. Choose the number Ntree of trees you want to build and repeat steps 1 and 2.
4. For a new data point, make each of your Ntree trees predict the value of Y for that data point, and assign the new data point the average of all the predicted Y values.

Implementing Random Forest Regression in Python

Our goal here is to build a team of decision trees, each making a prediction about the dependent variable. The final prediction of the random forest is the average of the predictions of all the trees.

For our example, we will use the Position_Salaries dataset to predict salary based on position. We'll use the numpy, pandas, and matplotlib libraries, together with scikit-learn's RandomForestRegressor, to implement our model. The code below fits forests of 10, 100, and 300 trees. It assumes that Position_Salaries.csv has the columns position, level, and salary, in that order.

```python
import numpy as np
import pandas as pd
import matplotlib.pyplot as plt
from sklearn.ensemble import RandomForestRegressor

dataset = pd.read_csv('Position_Salaries.csv')
X = dataset.iloc[:, 1:2].values  # position level (assumed 2nd column), kept 2-D
y = dataset.iloc[:, 2].values    # salary (assumed 3rd column)

# Fine grid of levels so the step-shaped prediction curve is visible
X_grid = np.arange(X.min(), X.max(), 0.01).reshape(-1, 1)

for n_trees in (10, 100, 300):
    regressor = RandomForestRegressor(n_estimators=n_trees, random_state=0)
    regressor.fit(X, y)
    plt.scatter(X, y, color='red')  # plotting real points
    plt.plot(X_grid, regressor.predict(X_grid), color='blue')  # plotting predicted points
    plt.title(f"Truth or Bluff (Random Forest - Smooth, {n_trees} trees)")
    plt.show()
```

The code above produces one graph per forest size. Each graph shows the real salaries in red and the forest's predictions over a fine grid of levels in blue.

Note that calling score on a regressor returns the R² score. From scikit-learn version 0.23 onward, it uses multioutput='uniform_average' to stay consistent with the default value of r2_score. This influences the score method of all multioutput regressors.

`class sklearn.ensemble.RandomForestClassifier(n_estimators=100, *, criterion='gini', max_depth=None, min_samples_split=2, min_samples_leaf=1, min_weight_fraction_leaf=0.0, max_features='sqrt', max_leaf_nodes=None, min_impurity_decrease=0.0, bootstrap=True, oob_score=False, n_jobs=None, random_state=None, verbose=0, warm_start=False, class_weight=None, ccp_alpha=0.0, max_samples=None)`

A random forest is a meta estimator that fits a number of decision tree classifiers on various sub-samples of the dataset and uses averaging to improve the predictive accuracy and control over-fitting. It is a random forest classifier with optimal splits. If bootstrap=True (the default), the sub-sample size is controlled with the max_samples parameter. Otherwise, the whole dataset is used to build each tree. For a comparison between tree-based ensemble models, see the example Comparing Random Forests and Histogram Gradient Boosting models.

Parameters:

- n_estimators: int, default=100. The number of trees in the forest.
- bootstrap: bool, default=True. Whether bootstrap samples are used when building trees. If False, the whole dataset is used to build each tree.
- oob_score: bool or callable, default=False. Whether to use out-of-bag samples to estimate the generalization score. Provide a callable with signature metric(y_true, y_pred) to use a custom metric. Only available if bootstrap=True.
- n_jobs: int, default=None. The number of jobs to run in parallel. fit, predict, decision_path and apply are all parallelized over the trees. None means 1 unless in a joblib.parallel_backend context.
- random_state: int, RandomState instance or None, default=None. Controls both the randomness of the bootstrapping of the samples used when building trees (if bootstrap=True) and the sampling of the features to consider when looking for the best split at each node (if max_features < n_features).
- verbose: int, default=0. Controls the verbosity when fitting and predicting.
- warm_start: bool, default=False. When set to True, reuse the solution of the previous call to fit and add more estimators to the ensemble. Otherwise, just fit a whole new forest. See Fitting additional weak-learners for details.
- class_weight: default=None. Weights associated with classes.

When predicting, the input samples X have shape (n_samples, n_features). Internally, their dtype will be converted to dtype=np.float32. If a sparse matrix is provided, it will be converted into a sparse csr_matrix.
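The four steps for random forest regression can be made concrete with a minimal from-scratch sketch. It assumes scikit-learn's DecisionTreeRegressor as the base tree and draws the K points with replacement (bootstrapping). The helper names fit_forest and predict_forest are hypothetical, and the synthetic data is illustrative only. Unlike a full random forest, this sketch does not subsample features at each split.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor


def fit_forest(X, y, n_trees=10, k=None, random_state=0):
    """Steps 1-3: build n_trees trees, each on k randomly drawn points (hypothetical helper)."""
    rng = np.random.default_rng(random_state)
    n_samples = X.shape[0]
    k = n_samples if k is None else k
    trees = []
    for _ in range(n_trees):  # Step 3: repeat steps 1 and 2 Ntree times
        # Step 1: pick k random data points (drawn with replacement, as in bootstrapping)
        idx = rng.integers(0, n_samples, size=k)
        # Step 2: build the decision tree associated with these k points
        tree = DecisionTreeRegressor(random_state=0)
        tree.fit(X[idx], y[idx])
        trees.append(tree)
    return trees


def predict_forest(trees, X_new):
    """Step 4: average the predictions of all trees (hypothetical helper)."""
    return np.mean([tree.predict(X_new) for tree in trees], axis=0)


if __name__ == "__main__":
    # Synthetic data standing in for level -> salary (illustrative only)
    X = np.arange(1, 11, dtype=float).reshape(-1, 1)
    y = 1000.0 * X.ravel() ** 2
    trees = fit_forest(X, y, n_trees=50, random_state=0)
    print(predict_forest(trees, np.array([[6.5]])))
```

With a single input feature, as in the salary example, per-split feature subsampling would make no difference anyway. Because each leaf value is a mean of training targets, the averaged prediction always stays within the range of the observed Y values.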

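The oob_score and warm_start parameters of RandomForestClassifier combine naturally. You can fit a forest, score it on its out-of-bag samples, and then grow it further without refitting the existing trees. Here is a minimal sketch. It assumes synthetic data from make_classification (not a dataset used in this article), and the variable names are illustrative.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

# Synthetic classification data (illustrative only)
X, y = make_classification(n_samples=500, n_features=8, random_state=0)

# oob_score is only available with bootstrap=True; warm_start keeps fitted trees between fits
clf = RandomForestClassifier(
    n_estimators=50, bootstrap=True, oob_score=True,
    warm_start=True, random_state=0,
)
clf.fit(X, y)
print(len(clf.estimators_), round(clf.oob_score_, 3))

first_tree = clf.estimators_[0]  # remember one existing tree

# Raise n_estimators and refit: the 50 existing trees are kept and 50 new ones are added
clf.set_params(n_estimators=100)
clf.fit(X, y)
print(len(clf.estimators_), round(clf.oob_score_, 3))
```

If warm_start were left at False, the second fit call would discard the first 50 trees and build a whole new forest of 100.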